We have all been there: you ask ChatGPT to write a Python script, and it returns a functional but unoptimized mess. Or you ask it to refactor a React component, and it hallucinates a library that was deprecated three years ago.

The problem is rarely the model itself; it is almost always the prompt. Large Language Models (LLMs) are probabilistic engines that require precise, architectural instructions to output production-ready code.

Without clear constraints on stack, style, and error handling, even GPT-4 or Claude 3.5 Sonnet will default to generic, beginner-level solutions. In this article, we will explore the best free AI prompt generator to fix this issue and how tools like Vinish.ai are redefining prompt engineering for developers.

Why LLMs Struggle with Coding Requests

To understand why we need generators, we must first understand how LLMs "code." They predict the next token based on patterns learned from billions of lines of code in public repositories, often prioritizing the most common pattern over the most efficient one.

If you provide a vague command like "Create a dashboard component," the AI typically falls back to a basic HTML structure without styling or state management. The result is code that requires heavy refactoring before it can be merged.

To make an LLM write senior-level code, you need to "box it in." You must define the tech stack, set performance constraints, and strictly define the input/output schema.
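As a rough illustration of "boxing in" a request, the sketch below collects the three kinds of constraints just listed (stack, performance, input/output schema) into one structure and renders them as a prompt. The `PromptSpec` class, its field names, and the sample values are hypothetical and chosen only for this example; they do not describe how any particular tool works internally.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the constraints that box in a coding request."""
    task: str
    stack: list[str]
    performance: list[str] = field(default_factory=list)
    input_schema: str = ""
    output_schema: str = ""

    def render(self) -> str:
        """Render the spec as plain text, one labelled section per constraint."""
        lines = [f"Task: {self.task}", f"Tech stack: {', '.join(self.stack)}"]
        if self.performance:
            lines.append("Performance constraints:")
            lines.extend(f"- {item}" for item in self.performance)
        if self.input_schema:
            lines.append(f"Input schema: {self.input_schema}")
        if self.output_schema:
            lines.append(f"Output schema: {self.output_schema}")
        return "\n".join(lines)


# Sample values are assumptions made up for this illustration.
spec = PromptSpec(
    task="Create a dashboard component",
    stack=["React 18", "TypeScript", "Tailwind CSS"],
    performance=["Avoid re-rendering the full list when one row changes"],
    input_schema="props: { rows: Array<{ id: string; value: number }> }",
    output_schema="a single default-exported function component",
)
print(spec.render())
```

Keeping the constraints as separate fields, rather than one free-form string, makes it harder to forget one of them when writing the next prompt.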

Introducing Vinish.ai: The Best Free AI Prompt Generator

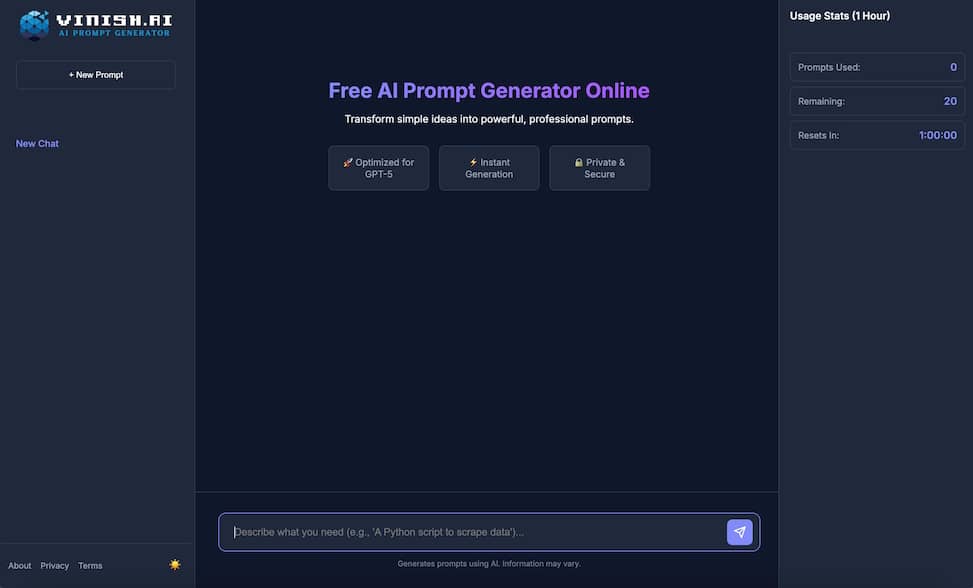

Vinish.ai is a specialized AI prompt generator designed to bridge the gap between developer intent and machine execution. Unlike generic tools, it architects each prompt around software engineering principles.

Context Injection and Persona Definition

The core strength of Vinish.ai lies in its ability to inject deep technical context. When you input a coding task, the generator automatically assigns a specific expert persona to the AI.

Instead of a generic assistant, the AI becomes a "Senior Python Architect" or a "Rust Systems Engineer with focus on concurrency." This forces the model to adopt best practices, error handling patterns, and architectural standards suitable for your domain.
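To make the idea of persona assignment concrete, here is a minimal sketch that picks a persona from keywords in the task and prepends it to the prompt. The keyword table, the fallback persona, and the function names are assumptions for illustration; the two persona strings are the ones quoted above, and nothing here is claimed to be Vinish.ai's actual selection logic.

```python
import re

# Hypothetical keyword-to-persona table (an assumption for this sketch).
PERSONAS = {
    "python": "Senior Python Architect",
    "rust": "Rust Systems Engineer with focus on concurrency",
}
DEFAULT_PERSONA = "Senior Software Engineer"


def pick_persona(task: str) -> str:
    """Return the first persona whose keyword appears in the task as a whole word."""
    words = set(re.findall(r"[a-z0-9+#]+", task.lower()))
    for keyword, persona in PERSONAS.items():
        if keyword in words:
            return persona
    return DEFAULT_PERSONA


def with_persona(task: str) -> str:
    """Prefix the task with a one-line persona statement."""
    return f"You are a {pick_persona(task)}.\n\n{task}"


print(with_persona("Write a Python script that deduplicates log lines"))
```

A real generator would presumably use something richer than keyword matching; the point is only that the persona is a deterministic prefix added before the task text.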

Enforcing Output Structure

One of the biggest frustrations for developers is getting chatty responses instead of raw code. You might ask for a JSON configuration and get three paragraphs explaining what JSON is.

Vinish.ai solves this by appending strict output formatting rules to every prompt. It explicitly instructs the model to "return only the code block," "include comments for complexity analysis," or "format as a unified diff," ensuring you get copy-paste ready code.
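The formatting rules quoted above can be thought of as a fixed section appended to the prompt, paired with a small check on the reply. The sketch below shows both halves under stated assumptions: `add_output_rules` and `extract_code` are hypothetical helper names, and the fence marker is built programmatically only so the example can be shown inside a fenced block.

```python
import re

FENCE = "`" * 3  # three backticks, built indirectly to keep this listing well-formed

# Matches the first fenced block, with an optional language tag after the opening fence.
CODE_BLOCK = re.compile(FENCE + r"[\w+-]*\n(.*?)" + FENCE, re.DOTALL)


def add_output_rules(prompt: str, rules: list[str]) -> str:
    """Append formatting rules as a bulleted section at the end of the prompt."""
    bullet_list = "\n".join(f"- {rule}" for rule in rules)
    return f"{prompt}\n\nOutput format:\n{bullet_list}"


def extract_code(reply: str) -> str:
    """Return the body of the first fenced block, or the stripped reply if none exists."""
    match = CODE_BLOCK.search(reply)
    return match.group(1).strip() if match else reply.strip()


prompt = add_output_rules(
    "Write a JSON configuration that disables debug mode.",
    ["Return only the code block.", "Format as a unified diff when editing existing files."],
)
print(prompt)
```

If a model still wraps its answer in explanation, `extract_code` recovers the part you actually wanted to paste.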

Handling "Negative Constraints"

Telling an AI what not to do is vital for preventing technical debt. Vinish.ai includes negative constraints to prevent common anti-patterns, such as "do not use deprecated libraries like request" (the Node.js HTTP client that was deprecated in 2020) or "avoid global variables."

These guardrails can significantly reduce the debugging time required after the AI generates its solution. Used well, they help turn a rough prototype into a production-candidate snippet in a single shot.
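Negative constraints are easier to trust when you can verify that the reply honoured them. As a sketch, the following uses Python's standard `ast` module to scan generated Python source for `global` statements and for imports from a banned list. The function name, the sample snippet, and the choice of `imp` as the banned module (a standard-library module that was deprecated and later removed in Python 3.12) are assumptions for this example; the same idea would need a different parser for JavaScript or other languages.

```python
import ast


def find_violations(source: str, banned_imports: set[str]) -> list[str]:
    """List banned imports and `global` statements found in Python source, by line."""
    found: list[tuple[int, str]] = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Global):
            found.append((node.lineno, "global statement"))
        elif isinstance(node, ast.Import):
            for alias in node.names:
                if alias.name.split(".")[0] in banned_imports:
                    found.append((node.lineno, f"import of {alias.name}"))
        elif isinstance(node, ast.ImportFrom):
            if node.module and node.module.split(".")[0] in banned_imports:
                found.append((node.lineno, f"import from {node.module}"))
    # ast.walk does not guarantee source order, so sort by line number.
    return [f"line {lineno}: {message}" for lineno, message in sorted(found)]


# Hypothetical model output used only to exercise the checker.
generated = """\
import imp
counter = 0

def bump():
    global counter
    counter += 1
"""

for violation in find_violations(generated, {"imp"}):
    print(violation)
```

The source is only parsed, never executed, so the check is safe to run on untrusted model output.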

Building Your AI Arsenal for 2026

While an AI prompt generator is a critical utility, the developer tools landscape is expanding rapidly. To stay competitive, you need to be aware of the broader ecosystem of coding assistants.

Curated directories are a good place to find more tools for your coding workflow in 2026. For the latest releases, keep an eye on AI Parabellum, a comprehensive AI tools directory.

AI Parabellum serves as a radar for emerging dev tools. Whether you need autonomous debugging agents, vector databases for RAG, or specialized fine-tuning platforms, referencing a trusted directory helps you avoid coding with obsolete methods.

How to Use the Vinish.ai Prompt Generator Effectively

Getting the most out of Vinish.ai requires a slight shift in how you approach your initial technical requirements. Here is a workflow to maximize code quality.

Step 1: Define the Technical Intent

Start with a specific architectural goal. Instead of typing "help me with SQL," type "I need a PostgreSQL query to aggregate user sessions by region, optimized for a table with 10 million rows."

The more specific your constraints on scale and performance, the more precise the engineered prompt will be. Vinish.ai takes this seed and expands it into a comprehensive technical specification.

Step 2: Iterate and Refactor

Copy the generated prompt into your IDE's chat assistant or browser. If the code isn't perfect, don't rewrite the code manually; refine the prompt.

Use the structure provided by Vinish.ai to tweak the constraints. Perhaps you need to add "use async/await pattern" or "implement error logging middleware"; simply adjust the prompt and regenerate.
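The "refine the prompt, not the code" loop can be sketched as a small immutable structure where each iteration produces a new prompt version with extra constraints. The `Prompt` class, the `refine` helper, and the sample task are hypothetical names invented for this illustration.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Prompt:
    """Hypothetical prompt version: a task plus an ordered tuple of constraints."""
    task: str
    constraints: tuple[str, ...] = ()

    def render(self) -> str:
        lines = [self.task]
        if self.constraints:
            lines.append("Constraints:")
            lines += [f"- {c}" for c in self.constraints]
        return "\n".join(lines)


def refine(prompt: Prompt, *extra: str) -> Prompt:
    """Return a new prompt with extra constraints appended, skipping ones already present."""
    new = tuple(c for c in extra if c not in prompt.constraints)
    return replace(prompt, constraints=prompt.constraints + new)


v1 = Prompt("Write an Express route handler for file uploads")
v2 = refine(v1, "Use async/await pattern", "Implement error logging middleware")
print(v2.render())
```

Because each version is a separate frozen object, earlier prompts stay available for comparison if a new constraint makes the output worse.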

The Future of AI-Assisted Programming

As we move toward 2026, the ability to "program via prompt" will become a fundamental literacy for engineers. Coding will evolve from syntax generation to system orchestration.

Tools like Vinish.ai are the training wheels for this future. They teach you the structure of a precise technical specification, helping you internalize the logic of AI collaboration.

Eventually, you will find yourself structuring requirements better naturally. But until then, having a generator that enforces engineering rigor is a massive productivity booster.

Conclusion: Stop Debugging Bad AI Code

There is no reason to settle for buggy or insecure AI responses in an era of advanced models. By using a dedicated prompt generator like Vinish.ai, you push the LLM to operate at the level of a senior engineer.

It transforms the frustration of "lazy AI" into the satisfaction of a reliable, automated coding partner. Remember to keep your toolkit sharp by visiting an AI tools directory like AI Parabellum to discover what is next on the horizon.

Mastering the prompt is mastering the codebase. Start generating better instructions today and watch your deployment velocity soar.