Why accessibility testing belongs in your build process, not your backlog

Most development teams treat accessibility the same way they treat technical debt: something to address later, once the real work is done. Later tends to arrive as a legal complaint or a client audit, and neither is a good moment to discover that your navigation is keyboard-inaccessible or half your form labels are missing.

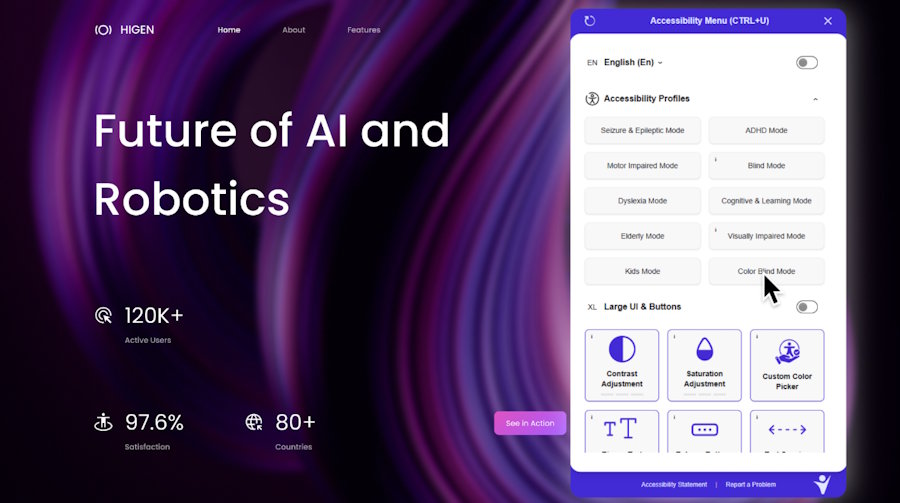

Accessibility problems come from every layer of a project. Design decisions that ignore contrast. Development choices that skip semantic HTML. Content updates that ship without alt text. They compound quietly across every release. Accessibility monitoring surfaces them continuously and suggests fixes where they can be applied automatically, so teams are working through a list rather than discovering it all at once.

Why accessibility keeps failing the same way

WebAIM's annual scan of the top 1 million home pages finds the same violations year after year. Low contrast text, missing image descriptions, empty links, missing form labels, and missing document language account for the majority of failures detected. Not obscure edge cases. The output of normal project work done without accessibility in scope.
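Several of these failures can be found mechanically in static markup. The following is a minimal sketch, assuming the page is available as an HTML string. It uses only Python's standard `html.parser`, and the class and function names (`CommonFailureScanner`, `scan`) are illustrative rather than part of any existing tool. It covers three of the failures listed above: missing document language, images with no `alt` attribute, and links with no text.

```python
from html.parser import HTMLParser


class CommonFailureScanner(HTMLParser):
    """Flags missing lang, images without alt, and empty links in static HTML."""

    def __init__(self):
        super().__init__()
        self.issues = []
        self._link_text = None  # collects text while the parser is inside an <a>

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "html":
            if not (attrs.get("lang") or "").strip():
                self.issues.append("missing document language")
        elif tag == "img":
            # alt="" is valid for decorative images, so only a missing
            # attribute is reported.
            if "alt" not in attrs:
                self.issues.append("image without alt attribute")
            elif self._link_text is not None:
                # Inside a link, the image's alt text names the link.
                self._link_text.append(attrs["alt"] or "")
        elif tag == "a":
            self._link_text = [attrs.get("aria-label") or ""]

    def handle_data(self, data):
        if self._link_text is not None:
            self._link_text.append(data)

    def handle_endtag(self, tag):
        if tag == "a" and self._link_text is not None:
            if not "".join(self._link_text).strip():
                self.issues.append("empty link")
            self._link_text = None


def scan(html):
    """Return a list of issue descriptions for one HTML document."""
    scanner = CommonFailureScanner()
    scanner.feed(html)
    scanner.close()
    return scanner.issues
```

A check like this only sees the markup as written. Content injected by scripts, and whether an `alt` text is actually meaningful, still need a rendered-page scan and human review.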

These failures share a common trait: none of them is visible to someone building and testing their own work on a modern monitor in a well-lit room. A contrast ratio of 3.2:1 looks fine until you check it against the 4.5:1 threshold WCAG 2.2 Level AA requires for normal-size text. A button with no accessible name looks fine until a screen reader announces it as "button" with no further context. A form with placeholder text instead of labels looks fine until the user starts typing and the placeholder, the only hint of what the field is for, disappears.

Standard code review is not designed to catch this, because that is not what code review is for. Code review checks logic, performance, and architecture. It does not simulate the experience of a user who cannot see the screen, cannot use a mouse, or relies on a screen reader to navigate. Those gaps only become visible when you specifically look for them, with tools and processes designed for that purpose.

The deeper issue is that accessibility problems are not random. They cluster around the same decisions made the same way across thousands of projects. Placeholder text used instead of a label because it looks cleaner. An icon button shipped without an aria-label because the designer assumed the icon was self-explanatory. A colour combination signed off in a design review because everyone in the room had normal vision and a calibrated display. These are not isolated mistakes. They are the predictable output of a process that never made accessibility a requirement.

What WCAG 2.2 demands from a development team

WCAG 2.2 organises accessibility requirements into four principles: Perceivable, Operable, Understandable, and Robust. Each contains success criteria at three conformance levels: A, AA, and AAA. Level AA is the threshold that matters for most legal frameworks. EN 301 549 incorporates WCAG Level AA directly. Neither ADA Title III nor the UK Equality Act 2010 names WCAG in its text, but Level AA is the benchmark generally used in practice to assess compliance under both.

For a development team, the criteria that generate the most failures in practice are not the obscure ones. They are the foundational ones that touch every page of every project.

Contrast requirements under 1.4.3 mean that the text colour and background colour on every element must meet a minimum ratio: 4.5:1 for normal text and 3:1 for large text (at least 18pt, or 14pt bold). This applies to body copy, labels, placeholders, button text, link text, and anything else a user needs to read. It applies in every active state: default, hover, focus, error. Text in disabled (inactive) controls is the one formal exception.
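The ratio itself is straightforward arithmetic. The sketch below follows the relative luminance and contrast ratio definitions published in WCAG 2.x. The function names and the six-digit hex input format are assumptions made for illustration.

```python
def _channel(value):
    """Linearise one 0-255 sRGB channel using the WCAG 2.x definition."""
    c = value / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_colour):
    """Relative luminance of a colour given as '#rrggbb'."""
    h = hex_colour.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _channel(r) + 0.7152 * _channel(g) + 0.0722 * _channel(b)


def contrast_ratio(foreground, background):
    """Contrast ratio between two colours, from 1.0 (identical) to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_text(foreground, background, large_text=False):
    """True if the pair meets the 1.4.3 threshold for the given text size."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold
```

For example, `passes_aa_text("#767676", "#ffffff")` is true at roughly 4.54:1, while `#777777` on white falls just short at about 4.48:1. A one-step difference in grey is invisible to the eye and decisive for the check, which is why this belongs in tooling rather than in a visual review.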

Labelling requirements under 4.1.2 mean that every interactive element must have an accessible name that describes its purpose. Every input. Every button. Every link. Every custom component. The accessible name is what a screen reader announces. If it is absent or meaningless, the user has no way to know what the element does or where it goes.
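A rough static check for missing names might look like the sketch below. It is a deliberate simplification: the real accessible name computation has more steps than this, and the names used here (`NameChecker`, `find_unnamed_controls`) are hypothetical. The check accepts `aria-label`, `aria-labelledby`, a wrapping `<label>`, or a `<label for>` that matches the field's `id`, and it intentionally does not count `placeholder` as a label.

```python
from html.parser import HTMLParser

# Input types that are hidden or named by other means (value, alt).
SKIPPED_INPUT_TYPES = {"hidden", "submit", "reset", "button", "image"}


class NameChecker(HTMLParser):
    """Collects form fields and buttons that appear to lack an accessible name."""

    def __init__(self):
        super().__init__()
        self.label_for = set()   # ids referenced by <label for="...">
        self.fields = []         # (tag, id, already_named)
        self.issues = []
        self._label_depth = 0    # > 0 while inside a <label>
        self._button_text = None

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        aria_name = (attrs.get("aria-label") or attrs.get("aria-labelledby") or "").strip()
        if tag == "label":
            self._label_depth += 1
            if attrs.get("for"):
                self.label_for.add(attrs["for"])
        elif tag in ("input", "select", "textarea"):
            if (attrs.get("type") or "text").lower() in SKIPPED_INPUT_TYPES:
                return
            named = bool(aria_name) or self._label_depth > 0
            self.fields.append((tag, attrs.get("id"), named))
        elif tag == "button":
            self._button_text = [aria_name]
        elif tag == "img" and self._button_text is not None:
            self._button_text.append(attrs.get("alt") or "")

    def handle_data(self, data):
        if self._button_text is not None:
            self._button_text.append(data)

    def handle_endtag(self, tag):
        if tag == "label" and self._label_depth:
            self._label_depth -= 1
        elif tag == "button" and self._button_text is not None:
            if not "".join(self._button_text).strip():
                self.issues.append("button has no accessible name")
            self._button_text = None


def find_unnamed_controls(html):
    """Return issue descriptions for unnamed buttons and unlabelled fields."""
    checker = NameChecker()
    checker.feed(html)
    checker.close()
    issues = list(checker.issues)
    # Labels may appear after their field, so matching happens after parsing.
    for tag, field_id, named in checker.fields:
        if not named and field_id not in checker.label_for:
            issues.append(f"<{tag}> has no label (id={field_id!r})")
    return issues
```

This catches the icon-only button and the placeholder-only input. It cannot tell whether a name that is present is a useful one, so "click here" and "button 3" pass just as easily as a good label.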

Keyboard accessibility under 2.1.1 means that every task a mouse user can complete must also be completable using only a keyboard. This is not just about adding tabindex. It is about focus order, focus management in dynamic components, keyboard interaction patterns for custom widgets, and making sure that nothing in the interface requires a hover state to access.
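Most keyboard problems only show up when someone actually tabs through the page, but two warning signs can be spotted in markup. The sketch below is a heuristic under stated assumptions: it only sees inline `onclick` attributes (handlers attached from scripts are invisible to it), its list of natively focusable elements is abbreviated, and the class and function names are illustrative.

```python
from html.parser import HTMLParser

# Elements that are keyboard-focusable by default (<a> only with an href).
NATIVELY_FOCUSABLE = {"a", "button", "input", "select", "textarea", "summary"}


def _tabindex(attrs):
    """Return tabindex as an int, or None if absent or not a number."""
    try:
        return int(attrs.get("tabindex") or "")
    except ValueError:
        return None


class KeyboardChecker(HTMLParser):
    """Flags click targets outside the tab order and positive tabindex values."""

    def __init__(self):
        super().__init__()
        self.issues = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        index = _tabindex(attrs)
        native = tag in NATIVELY_FOCUSABLE and (tag != "a" or "href" in attrs)
        # An explicit tabindex overrides the default: -1 removes the element
        # from the tab order, 0 or above adds it.
        reachable = index >= 0 if index is not None else native
        if "onclick" in attrs and not reachable:
            self.issues.append(f"<{tag}> is clickable but not keyboard-focusable")
        if index is not None and index > 0:
            # Positive values override the natural focus order of the page.
            self.issues.append(f"<{tag}> uses positive tabindex={index}")


def find_keyboard_issues(html):
    """Return issue descriptions for one HTML document."""
    checker = KeyboardChecker()
    checker.feed(html)
    checker.close()
    return checker.issues
```

Passing this check is a starting point, not proof of keyboard support. A `div` with `tabindex="0"` can receive focus, but it still needs key handlers and a role before it behaves like a button, and focus management in dialogs and menus has to be tested by hand.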

Non-text contrast under 1.4.11 extends the contrast requirement beyond text to the visual boundaries of interactive elements. The border of a text input. The outline of a checkbox. The track of a toggle. All of these must meet a 3:1 ratio against their adjacent colours. This is one of the most commonly overlooked criteria in the standard and one of the most commonly failed.

The cost of finding accessibility issues late

An unlabelled button caught during development takes seconds to fix. The same button found during a site-wide audit six months after launch means identifying every instance across the codebase, fixing each one, retesting, and documenting the change. Multiply that across the violations a typical site carries and the remediation cost becomes significant.

The pattern repeats every time a site update ships without accessibility in scope. A new plugin, a CMS template change, a content update that introduces an image without alt text: each one adds to the total without anyone noticing until the next audit. By that point the developers who wrote the original code may have moved on, the design rationale for specific colour choices is forgotten, and the fixes require archaeology as much as engineering.
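One way to stop that accumulation is to compare each build's scan results against the previous ones and fail the build when something new appears. The sketch below assumes a scanner that reports each finding as a dictionary with `page`, `rule`, and `selector` keys. That shape is hypothetical, as are the function names, and would need adapting to whatever tool produces the results.

```python
import datetime
import json


def _key(violation):
    """Identity of a finding: same page, same rule, same element."""
    return (violation["page"], violation["rule"], violation["selector"])


def new_violations(baseline, current):
    """Return findings present in the current scan but absent from the baseline."""
    known = {_key(v) for v in baseline}
    return [v for v in current if _key(v) not in known]


def build_record(baseline, current, today=None):
    """Summarise one scan as a dated, JSON-serialisable record."""
    today = today or datetime.date.today()
    introduced = new_violations(baseline, current)
    resolved = new_violations(current, baseline)
    return {
        "date": today.isoformat(),
        "total": len(current),
        "introduced": introduced,
        "resolved": len(resolved),
        "passed": not introduced,  # the build fails only on regressions
    }


def append_record(path, record):
    """Append the record to a JSON Lines log so the history is kept."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(record) + "\n")
```

In a build script, the step would call `build_record`, append the result to the log, and exit with a non-zero status when `passed` is false. Gating on regressions rather than on the total lets a team adopt the check on a site that already carries violations, then work the baseline down release by release.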

There is also a compounding effect that rarely gets discussed. Accessibility problems interact with each other. A missing label on a form input is one violation. The same input with insufficient contrast on the label text, no visible focus indicator, and an error message that is not announced to screen readers is four violations on a single element. Each one traces back to a separate decision made at a separate point in the project. Fixing them in isolation after launch is slower and more expensive than building them correctly in the first place.

Welcoming Web's continuous accessibility monitoring catches regressions at the point they are introduced, not six months afterward when the trail has gone cold. Where issues can be resolved using AI-assisted remediation, suggested fixes are surfaced in the platform for teams to review and apply.

How the legal landscape is changing

Accessibility legislation is not static. ADA Title III web accessibility lawsuits have risen substantially over the past decade, and the trend shows no sign of reversing. The European Accessibility Act has applied since 28 June 2025, extending accessibility requirements to most organisations offering covered products and services to EU consumers, regardless of where they are based; microenterprises providing services are exempt. The UK Public Sector Bodies Accessibility Regulations have been in force since 2018 and apply to public sector websites and mobile applications.

The direction of travel is consistent across every major jurisdiction: accessibility requirements are expanding, enforcement is increasing, and the organisations caught unprepared are not always the ones you would expect. Small and mid-size businesses, startups, and agencies working on client projects are all within scope. The assumption that accessibility enforcement only targets large enterprises is no longer accurate.

The organisations that handle accessibility complaints best are not always the ones with perfect sites. They are the ones who can show a dated record of scans run, issues found, and issues resolved. That record does not build itself in a compliance sprint. It builds across every release, one update at a time. And it starts in the build process, not in the backlog.